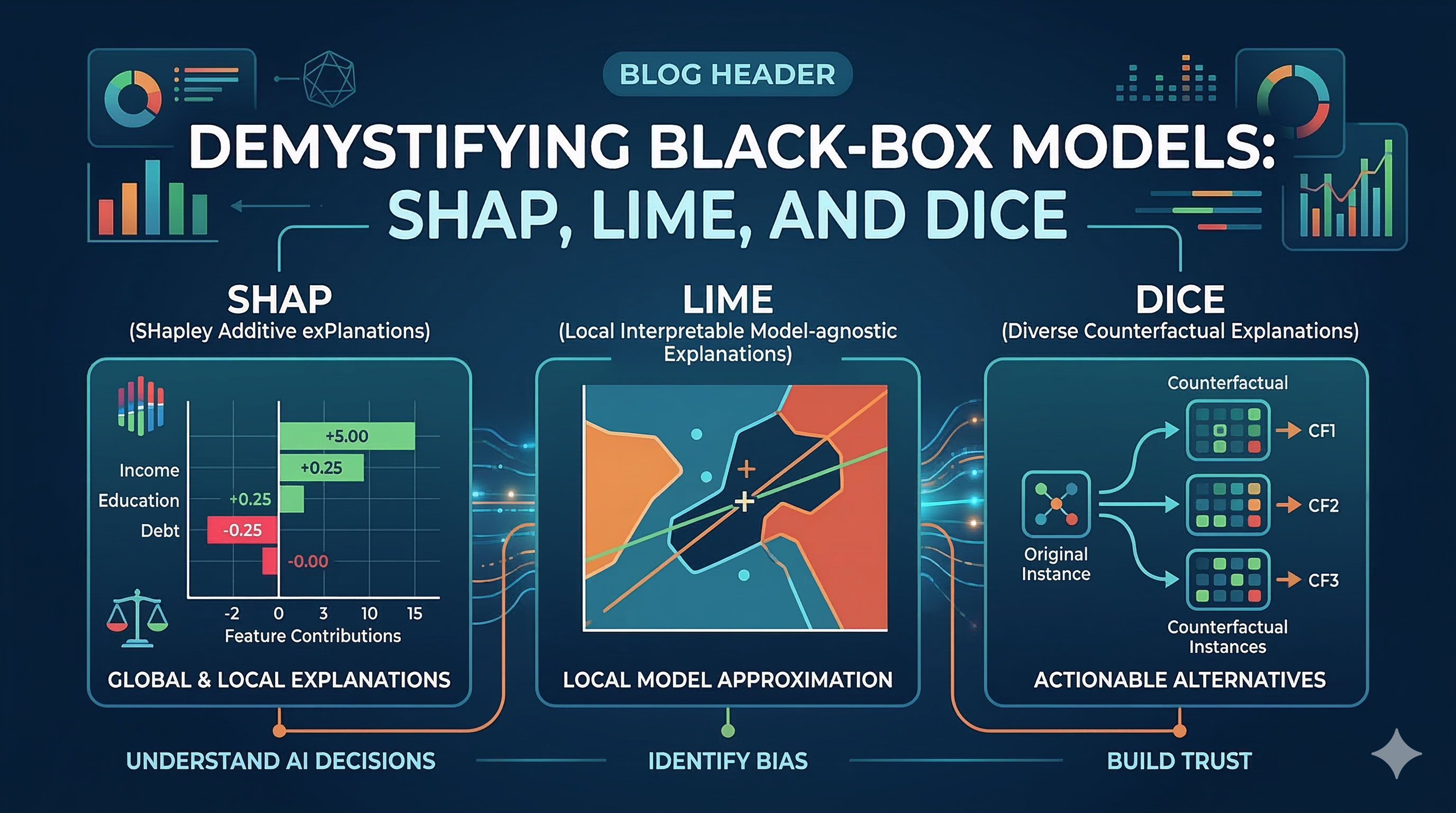

Explainable AI (XAI): SHAP, LIME, and DiCE

Keywords: Explainable AI, XAI, SHAP, LIME, DiCE, Model Interpretability, Machine Learning, Black Box Models

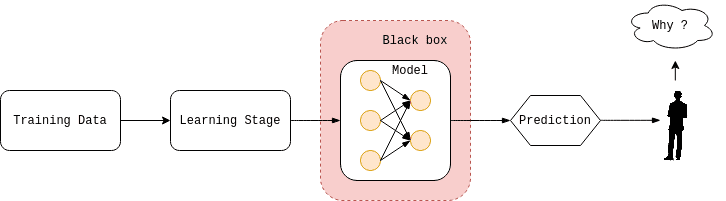

The Black Box Problem

Source: The AI Summer – Explainable AI (XAI): A survey of recent methods, applications and frameworks

As AI models grow more complex, the “Black Box” problem has become a significant challenge.

How can we trust a model if we don’t know why it made a certain decision?

A model that cannot be explained cannot be fully trusted.

This is where Explainable AI (XAI) comes in. XAI helps us understand how machine learning models make decisions and brings transparency to complex systems.

Explainability is especially important in high-stakes areas such as finance, healthcare, and autonomous systems.

Today, let’s explore three powerful tools for interpreting ML models:

- SHAP

- LIME

- DiCE

Why Explainable AI Matters

Modern machine learning models such as deep neural networks, gradient boosting models, and large language models often achieve impressive accuracy. However, understanding how they make decisions can be very challenging.

This raises important questions:

- Can we trust the model?

- Is the model biased?

- How can we debug incorrect predictions?

- How do we ensure fairness in sensitive applications like finance or healthcare?

Without interpretability, even a highly accurate model can become risky in real-world applications.

Explainable AI attempts to bridge this gap between model performance and human understanding.

1. SHAP (SHapley Additive exPlanations)

“The Fair Share Allocator”

SHAP is based on Shapley values from game theory, a concept used to fairly distribute rewards among players in a cooperative game.

In machine learning, each feature is treated as a player that contributes to the final prediction. SHAP calculates how much each feature pushed the model’s output above or below a baseline value, typically the average prediction over a background dataset.
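Formally, for a feature set F and a value function v(S) that gives the model’s output when only the features in S are known, the Shapley value of feature i averages its marginal contribution over every coalition S that excludes it:

$$
\phi_i = \sum_{S \subseteq F \setminus \{i\}} \frac{|S|!\,(|F|-|S|-1)!}{|F|!}\,\bigl[v(S \cup \{i\}) - v(S)\bigr]
$$

These values add up exactly to the prediction:

$$
f(x) = \phi_0 + \sum_{i \in F} \phi_i, \qquad \phi_0 = v(\varnothing)
$$

How v(S) handles the “missing” features is a modeling choice, and different SHAP explainers make different assumptions about it.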

Think of SHAP as a fair credit assignment system for model predictions.
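To make the credit-assignment idea concrete, here is a brute-force sketch that computes exact Shapley values for a tiny model. The toy loan-risk model, its coefficients, the applicant values, and the choice to fill “absent” features with a baseline value are all assumptions for illustration; the shap library offers faster approximations and model-specific algorithms for realistic feature counts.

```python
from itertools import combinations
from math import factorial

FEATURES = ["income", "credit_history", "debt_ratio"]

def loan_risk(f):
    """Hypothetical toy risk model (higher = riskier); features scaled to [0, 1]."""
    income, credit_history, debt_ratio = f
    # The interaction term makes the model non-additive, so the
    # Shapley weighting over coalitions actually matters.
    return (0.8 * debt_ratio - 0.5 * income - 0.6 * credit_history
            + 0.2 * debt_ratio * (1 - credit_history))

def shapley_values(model, x, baseline):
    """Exact Shapley values by enumerating every coalition of the other features.

    Assumption: a feature 'absent' from a coalition takes its baseline value.
    """
    n = len(x)

    def value(coalition):
        # Model output when only features in `coalition` take their real values.
        mixed = [x[j] if j in coalition else baseline[j] for j in range(n)]
        return model(mixed)

    phis = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        phi = 0.0
        for size in range(n):  # coalition sizes 0 .. n-1
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi += weight * (value(s | {i}) - value(s))
        phis.append(phi)
    return phis

x = [0.7, 0.8, 0.9]          # hypothetical applicant
baseline = [0.5, 0.5, 0.5]   # hypothetical "average" applicant
phis = shapley_values(loan_risk, x, baseline)
for name, phi in zip(FEATURES, phis):
    print(f"{name:>15}: {phi:+.3f}")

# Additivity check: baseline output plus all contributions recovers the prediction.
print(abs(loan_risk(baseline) + sum(phis) - loan_risk(x)) < 1e-9)
```

The number of coalitions grows exponentially with the number of features, which is why practical tools approximate this sum or exploit the model’s structure.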

Example

Imagine a model predicting loan default risk. SHAP might show contributions like this:

| Feature | Contribution to predicted risk |
|---|---|
| Income | -0.30 |
| Credit History | -0.42 |
| Debt Ratio | +0.55 |

This means:

- Debt ratio increased the predicted risk

- Income and credit history reduced the predicted risk
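Because SHAP contributions are additive, the table can be checked with simple arithmetic. Assuming these three features are the model’s only inputs, and writing φ0 for the baseline (average) predicted risk, which the example does not state:

$$
f(x) = \phi_0 + (-0.30) + (-0.42) + 0.55 = \phi_0 - 0.17
$$

Debt ratio pushes the risk up, but income and credit history pull it down by more, so this applicant’s predicted risk ends up 0.17 below the baseline.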

Key Strengths

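- Mathematical guarantees such as local accuracy and consistency

- Strong theoretical foundation in cooperative game theory

- Works for both local explanations (a single prediction) and global explanations (contributions aggregated over a dataset)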
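For a linear model with independent features, using the dataset mean as the baseline, SHAP values have a closed form: φ_i = w_i (x_i − mean_i). That makes the local-versus-global distinction easy to show: one row gives a local explanation, and averaging absolute contributions over many rows gives a global importance score, a common convention for global SHAP importance. The coefficients and data rows below are hypothetical.

```python
def linear_shap(weights, means, row):
    """SHAP values of a linear model f(x) = b + sum(w_i * x_i), assuming
    independent features and the dataset mean as baseline: phi_i = w_i * (x_i - mean_i)."""
    return [w * (v - m) for w, v, m in zip(weights, row, means)]

def global_importance(weights, data):
    """Global importance of each feature as its mean absolute SHAP value."""
    n_rows = len(data)
    means = [sum(col) / n_rows for col in zip(*data)]
    totals = [0.0] * len(weights)
    for row in data:
        for j, phi in enumerate(linear_shap(weights, means, row)):
            totals[j] += abs(phi)
    return [t / n_rows for t in totals]

# Hypothetical coefficients: income, credit history, debt ratio.
weights = [-0.5, -0.6, 0.8]
# Hypothetical applicants (scaled features).
data = [[0.7, 0.8, 0.9], [0.2, 0.8, 0.3], [0.5, 0.6, 0.6], [0.9, 0.2, 0.4]]

means = [sum(col) / len(data) for col in zip(*data)]
print("local (first applicant):", linear_shap(weights, means, data[0]))
print("global (mean |SHAP|):  ", global_importance(weights, data))
```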

Best Use Case

Understanding feature importance and why a specific prediction occurred.

2. LIME (Local Interpretable Model-agnostic Explanations)

“The Local Translator”

LIME focuses on explaining one prediction at a time rather than interpreting the entire model.

It works by:

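- Generating slightly perturbed samples around the input being explained

- Observing how the model’s predictions change on those samples

- Training a simple interpretable model (such as linear regression) on the samples, weighting each by how close it is to the original input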

This creates an approximation of the complex model that is valid only near the selected data point, which is why LIME’s explanations are local.
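The three steps above can be sketched in plain Python. This is a minimal LIME-style illustration, not the lime package’s implementation: the black-box function, the Gaussian perturbation scale, and the exponential kernel width are assumptions, and the real library also handles details such as discretization, feature selection, and sampling based on training-data statistics.

```python
import math
import random

def black_box(x):
    """Hypothetical nonlinear model standing in for the complex model."""
    return math.sin(x[0]) + x[1] ** 2

def solve(a, b):
    """Solve a @ w = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                for c in range(col, n + 1):
                    m[r][c] -= factor * m[col][c]
    return [m[i][n] / m[i][i] for i in range(n)]

def lime_explain(model, x, n_samples=500, scale=0.1, kernel_width=0.25, seed=0):
    """Minimal LIME-style local surrogate. Returns [intercept, w_1, ..., w_d]."""
    rng = random.Random(seed)
    rows, targets, weights = [], [], []
    for _ in range(n_samples):
        z = [xi + rng.gauss(0.0, scale) for xi in x]          # 1. perturb the input
        targets.append(model(z))                              # 2. query the black box
        dist2 = sum((zi - xi) ** 2 for zi, xi in zip(z, x))
        weights.append(math.exp(-dist2 / kernel_width ** 2))  # closer samples count more
        rows.append([1.0] + z)                                # intercept column + features
    # 3. Fit the weighted linear surrogate via the normal equations (X^T W X) w = X^T W y.
    k = len(x) + 1
    xtwx = [[sum(wt * r[i] * r[j] for r, wt in zip(rows, weights)) for j in range(k)]
            for i in range(k)]
    xtwy = [sum(wt * r[i] * y for r, y, wt in zip(rows, targets, weights)) for i in range(k)]
    return solve(xtwx, xtwy)

coef = lime_explain(black_box, [0.0, 1.0])
print("intercept:", round(coef[0], 3), "local slopes:", [round(c, 3) for c in coef[1:]])
```

Because the surrogate is fitted only near x = (0, 1), its slopes approximate the black box’s local gradient there (about 1 and 2) rather than its global behavior.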

LIME acts like a translator that explains a complex model in simple terms.

Example

If an image classifier predicts “cat”, LIME may highlight the specific regions of the image that influenced the prediction.

For text classification, LIME can highlight important words that contributed to the model’s decision.
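For text, the perturbations are typically “remove some words”. The sketch below follows the same recipe with binary word masks; because the sentence is short, it enumerates all 2^5 = 32 masks instead of sampling them. The keyword scorer is a hypothetical additive stand-in for a real classifier, chosen so the recovered weights are easy to check, and the kernel on the fraction of removed words is an assumption.

```python
import itertools
import math

# Hypothetical per-word scores for a toy sentiment scorer (positive = favorable).
WORD_SCORES = {"great": 2.0, "boring": -1.5, "plot": 0.2}

def sentiment_score(text):
    """Hypothetical additive black box: sums the scores of the words present."""
    return sum(WORD_SCORES.get(word, 0.0) for word in text.split())

def solve(a, b):
    """Solve a @ w = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                for c in range(col, n + 1):
                    m[r][c] -= factor * m[col][c]
    return [m[i][n] / m[i][i] for i in range(n)]

def lime_text(model, text, kernel_width=0.5):
    """LIME-style word weights; assumes no repeated words in `text`."""
    words = text.split()
    n = len(words)
    rows, targets, weights = [], [], []
    for mask in itertools.product([0, 1], repeat=n):  # every keep/drop pattern
        kept = " ".join(w for w, keep in zip(words, mask) if keep)
        removed_frac = 1 - sum(mask) / n
        rows.append([1.0] + [float(keep) for keep in mask])  # intercept + word indicators
        targets.append(model(kept))
        weights.append(math.exp(-removed_frac ** 2 / kernel_width ** 2))
    k = n + 1
    # Weighted least squares via the normal equations (X^T W X) w = X^T W y.
    xtwx = [[sum(wt * r[i] * r[j] for r, wt in zip(rows, weights)) for j in range(k)]
            for i in range(k)]
    xtwy = [sum(wt * r[i] * y for r, y, wt in zip(rows, targets, weights)) for i in range(k)]
    coef = solve(xtwx, xtwy)
    return dict(zip(words, coef[1:]))  # drop the intercept

word_weights = lime_text(sentiment_score, "great acting but boring plot")
for word, w in sorted(word_weights.items(), key=lambda kv: -abs(kv[1])):
    print(f"{word:>7}: {w:+.2f}")
```

With an additive scorer the surrogate recovers each word’s score exactly; with a real, nonlinear classifier the weights would only approximate its behavior near the original sentence.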

Key Strengths

- Model-agnostic (works with any ML model)

- Fast and easy to apply

- Useful for debugging individual predictions

Best Use Case

Explaining single predictions quickly.

3. DiCE (Diverse Counterfactual Explanations)

“The ‘What-If’ Explorer”

Unlike SHAP or LIME, DiCE focuses on counterfactual explanations.

Instead of explaining why a prediction happened, DiCE answers the question:

“What would need to change to obtain a different outcome?”
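The original DiCE paper frames this as an optimization over a set of k counterfactuals c_1, …, c_k for an input x and a desired outcome y. Roughly:

$$
C(x) = \arg\min_{c_1,\dots,c_k}\; \frac{1}{k}\sum_{i=1}^{k} \operatorname{yloss}\bigl(f(c_i), y\bigr) + \frac{\lambda_1}{k}\sum_{i=1}^{k} \operatorname{dist}(c_i, x) - \lambda_2\,\operatorname{diversity}(c_1,\dots,c_k)
$$

The first term pushes each counterfactual toward the desired outcome, the second keeps it close to the original input, and the third rewards counterfactuals that differ from one another (measured in the paper with a determinantal point process); λ1 and λ2 set the trade-offs.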

Example

Loan approval scenario:

Your loan application was rejected.

DiCE might return counterfactuals such as:

- Your income is $5,000 higher, or

- Your loan amount is $2,000 lower.

In either case, the model would likely approve the loan.
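A minimal what-if search can reproduce the loan example above. The model and its 40% rule, the applicant figures, and the helper names are all hypothetical, and the search is plain brute force rather than DiCE’s own method, which can change several features at once and balances proximity against diversity.

```python
def approves(income, loan_amount):
    """Hypothetical black-box loan model: approve if the loan is at most 40% of income.
    Uses integer arithmetic (5 * loan <= 2 * income) to avoid floating-point edge cases."""
    return 5 * loan_amount <= 2 * income

def what_if(x, steps, model, max_steps=10):
    """Brute-force counterfactual search (a simple stand-in, not DiCE's algorithm).

    For each actionable feature, try changing it by 1, 2, ... multiples of its
    step size and keep the smallest change that flips the decision. Changing a
    different feature in each counterfactual makes the results diverse by construction.
    """
    found = []
    for feature, step in steps.items():
        for k in range(1, max_steps + 1):
            candidate = dict(x)
            candidate[feature] = x[feature] + k * step
            if model(**candidate):
                found.append((feature, k * step))
                break  # smallest sufficient change for this feature
    return found

applicant = {"income": 50_000, "loan_amount": 22_000}  # currently rejected
steps = {"income": 1_000, "loan_amount": -1_000}       # allowed direction and step of change
for feature, delta in what_if(applicant, steps, approves):
    print(f"{feature}: {delta:+,} -> approved")
```

With these numbers, the search returns the two counterfactuals from the example: income +$5,000 or loan amount −$2,000.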

Counterfactual explanations are especially useful because they provide actionable insights for users.

Key Strengths

- Provides practical recommendations

- Generates multiple, diverse counterfactuals for the same input

- Helpful for decision-support systems

Best Use Case

Providing user-facing explanations and actionable guidance.

Comparison: Which one should you use?

| Tool | Approach | Explanation Type | Best For |
|---|---|---|---|
| SHAP | Game Theory | Feature Attribution | Understanding feature importance |
| LIME | Local Surrogate Model | Feature Attribution | Explaining a single prediction |
| DiCE | Counterfactual Analysis | “What-if” Scenarios | Providing actionable recommendations |

Conclusion

Understanding these techniques is crucial for building trustworthy AI systems.

Each method provides a different perspective:

- SHAP offers mathematically grounded feature attribution.

- LIME provides fast local explanations.

- DiCE delivers actionable counterfactual insights.

In practice, combining multiple XAI techniques often leads to the most complete understanding of model behavior.

As machine learning systems increasingly influence real-world decisions, interpretability will become just as important as model accuracy.